Formula 1 Real-time Data Engineering Pipeline

Modern Data Architecture for Racing Analytics

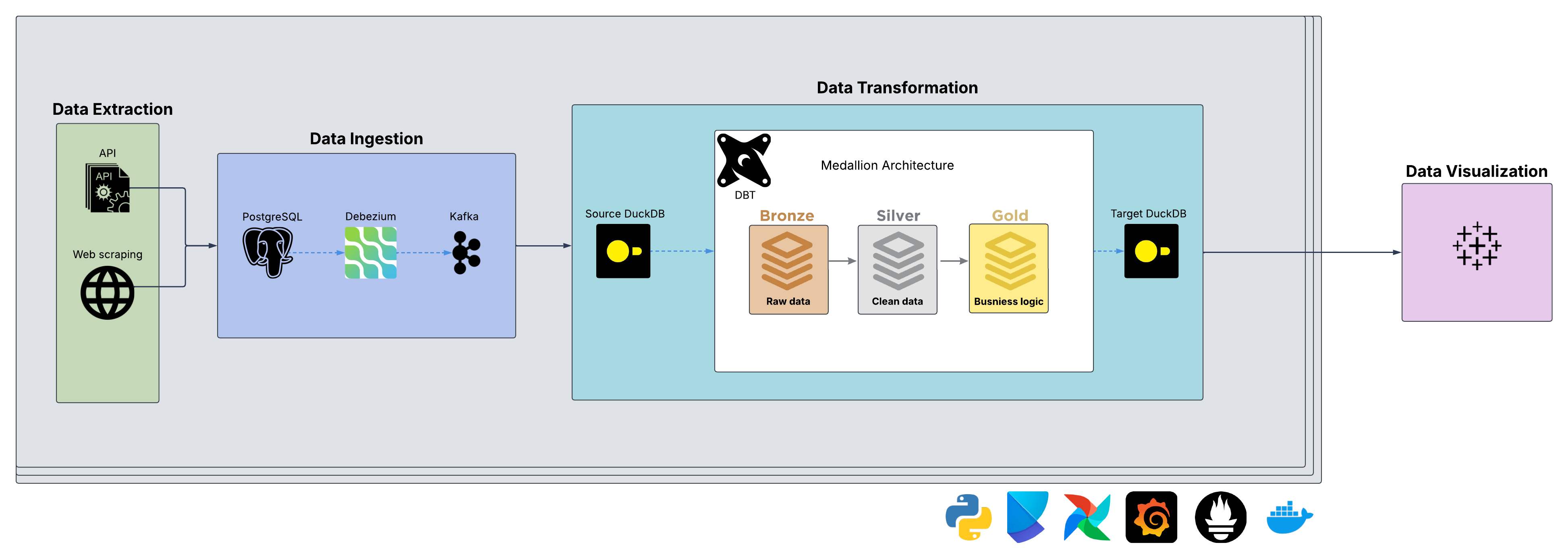

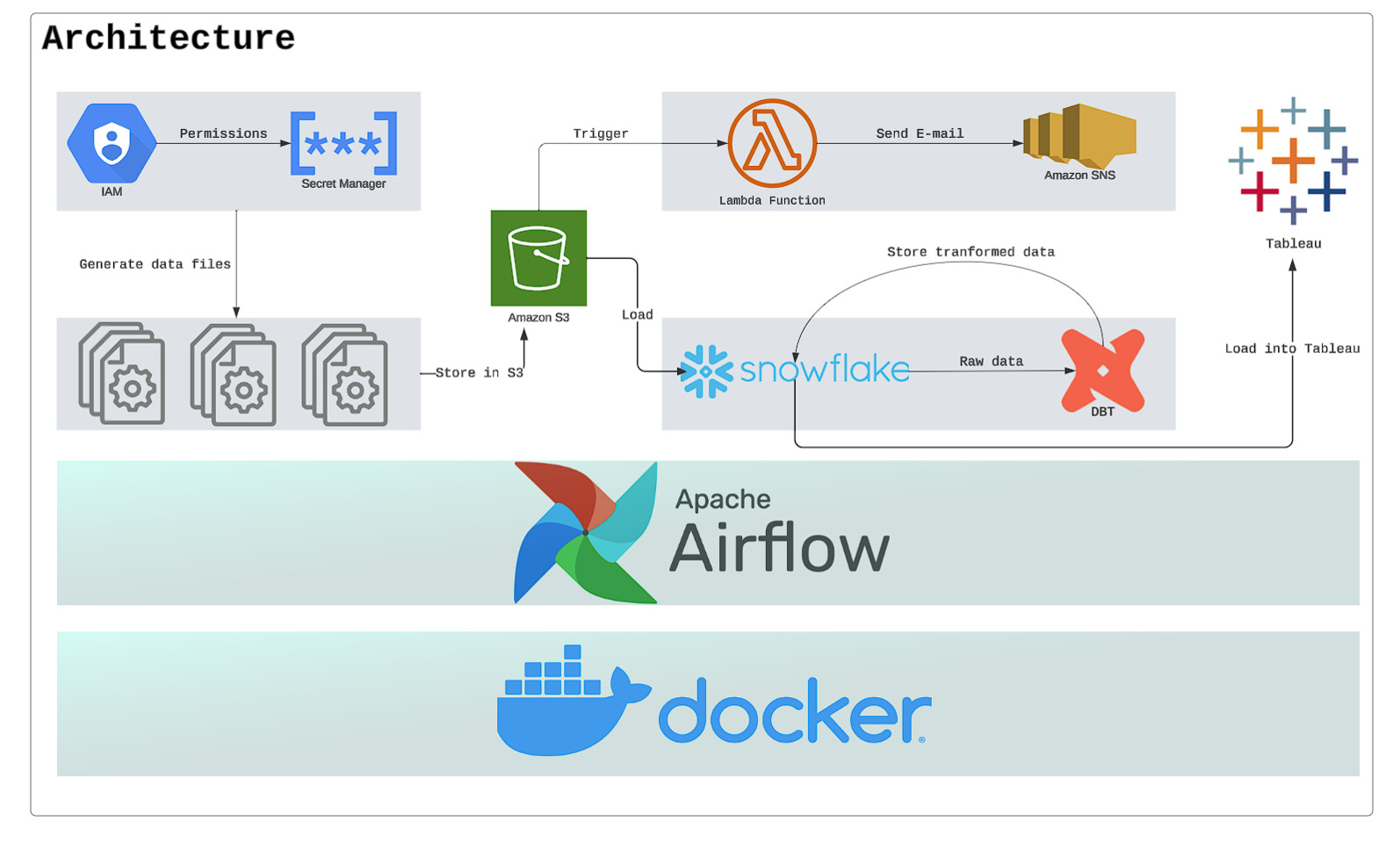

This project builds a data pipeline that processes Formula 1 racing data using modern data engineering tools. Although the source data arrives in batches, I implemented a real-time architecture for learning purposes. The system uses Python for data collection, PostgreSQL for storage, Kafka and Debezium for streaming, and DuckDB with dbt for analytics.
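Here is a minimal sketch of how a consumer might turn one Debezium change event into a flat change record. It assumes Debezium's JSON converter, which wraps the envelope in a `payload` field when schemas are enabled and leaves it at the top level when they are not. It works on the raw message value from whichever Kafka client is used. The `parse_change_event` name, the output record shape, and the `driver_standings` table in the usage lines are hypothetical and do not come from the project.

```python
import json
from typing import Any, Optional

# Debezium "op" codes mapped to readable operation names (subset handled here).
OP_NAMES = {"c": "insert", "u": "update", "d": "delete", "r": "snapshot"}


def parse_change_event(raw: Optional[bytes]) -> Optional[dict]:
    """Flatten one Debezium change event into a simple change record.

    Returns None for tombstone messages (null Kafka value) and for
    operations this sketch does not handle (e.g. truncate).
    """
    if raw is None:
        return None  # tombstone emitted after a delete, used for log compaction
    event: dict[str, Any] = json.loads(raw)
    # With schemas enabled the envelope is nested under "payload".
    payload = event.get("payload", event)
    op = OP_NAMES.get(payload.get("op"))
    if op is None:
        return None
    # Deletes carry the old row in "before"; other operations use "after".
    row = payload.get("before") if op == "delete" else payload.get("after")
    return {
        "op": op,
        "table": (payload.get("source") or {}).get("table"),
        "ts_ms": payload.get("ts_ms"),
        "row": row,
    }


if __name__ == "__main__":
    # Hypothetical update event for an illustrative standings table.
    sample = json.dumps({
        "payload": {
            "before": {"driver_id": 1, "points": 25},
            "after": {"driver_id": 1, "points": 43},
            "source": {"table": "driver_standings"},
            "op": "u",
            "ts_ms": 1700000000000,
        }
    }).encode()
    print(parse_change_event(sample)["op"])  # prints: update
```

Returning `None` for tombstones lets downstream code skip them cleanly. With PostgreSQL's default replica identity, the `before` image of a delete holds only the primary-key columns, which is usually enough to identify the removed row.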

Project Type: Data Engineering / Streaming

Skills Applied: Stream Processing, CDC, Data Modeling

Learning Focus: Event-Driven Architecture

Key Features

- Complex data collection from multiple F1 data sources

- PostgreSQL as the OLTP database, with DuckDB as the OLAP engine for analytics

- Real-time data streaming with Kafka and Debezium

- Change Data Capture (CDC) for event tracking

- Slowly Changing Dimensions (SCD) Type 2 implementation with dbt

- Medallion architecture for data quality management

- Asynchronous Python programming for efficient API calls
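In dbt, SCD Type 2 is usually built with snapshots. A snapshot keeps every version of a row and adds `dbt_valid_from` and `dbt_valid_to` columns. The sketch below is not the project's dbt code. It is an in-memory Python illustration of the same idea: when a tracked attribute changes, the open version is closed at the change timestamp and a new open version is appended. The names `Version`, `apply_scd2`, and `as_of`, and the driver-to-team data, are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Version:
    """One historical version of a dimension row (hypothetical shape)."""
    key: Any
    attrs: dict
    valid_from: int
    valid_to: Optional[int] = None  # None marks the current (open) version


def apply_scd2(history: list, key: Any, attrs: dict, ts: int) -> list:
    """Apply one observed record at time `ts` to an SCD Type 2 history in place."""
    current = next(
        (v for v in history if v.key == key and v.valid_to is None), None
    )
    if current is not None:
        if current.attrs == attrs:
            return history  # unchanged: keep the open version as-is
        current.valid_to = ts  # changed: close the old version
    history.append(Version(key, dict(attrs), valid_from=ts))
    return history


def as_of(history: list, key: Any, ts: int) -> Optional[Version]:
    """Return the version of `key` that was valid at time `ts`, if any."""
    return next(
        (
            v
            for v in history
            if v.key == key
            and v.valid_from <= ts
            and (v.valid_to is None or ts < v.valid_to)
        ),
        None,
    )


if __name__ == "__main__":
    # Illustrative data: a driver changes teams between two seasons.
    history: list = []
    apply_scd2(history, "driver_1", {"team": "Team A"}, ts=2023)
    apply_scd2(history, "driver_1", {"team": "Team A"}, ts=2024)  # no-op
    apply_scd2(history, "driver_1", {"team": "Team B"}, ts=2025)
    print(as_of(history, "driver_1", 2024).attrs)  # prints {'team': 'Team A'}
```

The half-open validity interval (`valid_from <= ts < valid_to`) ensures that exactly one version matches any point in time after the first observation. This is what makes point-in-time joins against the dimension unambiguous.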

Tags: Python, PostgreSQL, Kafka, Debezium, DuckDB, dbt, Airflow, Docker, Grafana, Prometheus, Poetry, Tableau, Data Modeling